A new generation of personal assistants promises to change the way we live. Apps such as Apple’s Siri, Amazon’s Alexa, Microsoft’s Cortana and Alphabet Inc.’s Google Assistant might live up to this promise, provided they work the way they are supposed to. But do they?

This matters because around 60% of users of voice-controlled digital devices ask their personal assistants fairly common questions, while 57% want to know what the weather will be. Around 54% of them use speech recognition to control music streaming.

To see how the different personal assistants compare with each other, digital marketing firm Stone Temple recently gathered a collection of 5,000 different questions about popular facts and put them to Apple’s Siri and the other personal assistants.

Below, readers can see how Siri, Alexa, Google Assistant and Cortana performed in the quiz. The first number is the percentage of questions that received a reply, and the second is the percentage of those replies that were complete and correct.

- Google Assistant: 68% answered; 90.6% correct

- Cortana: 56.5% answered; 81.9% correct

- Alexa: 20.7% answered; 87% correct

- Siri: 21.7% answered; 62.2% correct

Google Search responded 74.3% of the time, while 97.4% of its answers were complete and correct.
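To put the two numbers on a single scale, they can be multiplied together. The short sketch below does that with the figures quoted above. It assumes that the second percentage is a share of the questions each assistant answered (as the Google Search sentence suggests) and that every assistant was asked all 5,000 questions; the function names are illustrative and are not taken from the Stone Temple report.

```python
# Figures quoted in the article:
# (percent of questions answered, percent of those answers complete and correct)
RESULTS = {
    "Google Search": (74.3, 97.4),
    "Google Assistant": (68.0, 90.6),
    "Cortana": (56.5, 81.9),
    "Alexa": (20.7, 87.0),
    "Siri": (21.7, 62.2),
}

# Assumption: every assistant was asked the full set of 5,000 questions.
TOTAL_QUESTIONS = 5000


def overall_correct_share(answered_pct, correct_pct):
    """Percent of ALL questions that got a complete and correct answer."""
    return answered_pct * correct_pct / 100.0


def summarize(results, total=TOTAL_QUESTIONS):
    """Return (name, overall percent, approximate question count), best first."""
    rows = []
    for name, (answered_pct, correct_pct) in results.items():
        share = overall_correct_share(answered_pct, correct_pct)
        rows.append((name, round(share, 1), round(total * share / 100)))
    return sorted(rows, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    for name, share, count in summarize(RESULTS):
        print(f"{name}: about {share}% of all questions (~{count} of {TOTAL_QUESTIONS})")
```

Under these assumptions, an assistant that answers few questions ends up with a low overall share even when most of its replies are right, which is why Alexa’s 87% accuracy does not lift it near Cortana on this combined measure.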

When it comes to incorrect answers, Apple’s Siri led the pack, with 3% of its answers being just plain wrong.

Stone Temple concluded that Google Search and Google Assistant on Google’s home page were still clearly superior, but that Cortana was steadily closing the gap, having made great progress over the last three years.

The report continued by saying that Siri and Alexa both still faced the hurdle of being unable to benefit from a full web crawl to improve the results from their own databases.

Before you go

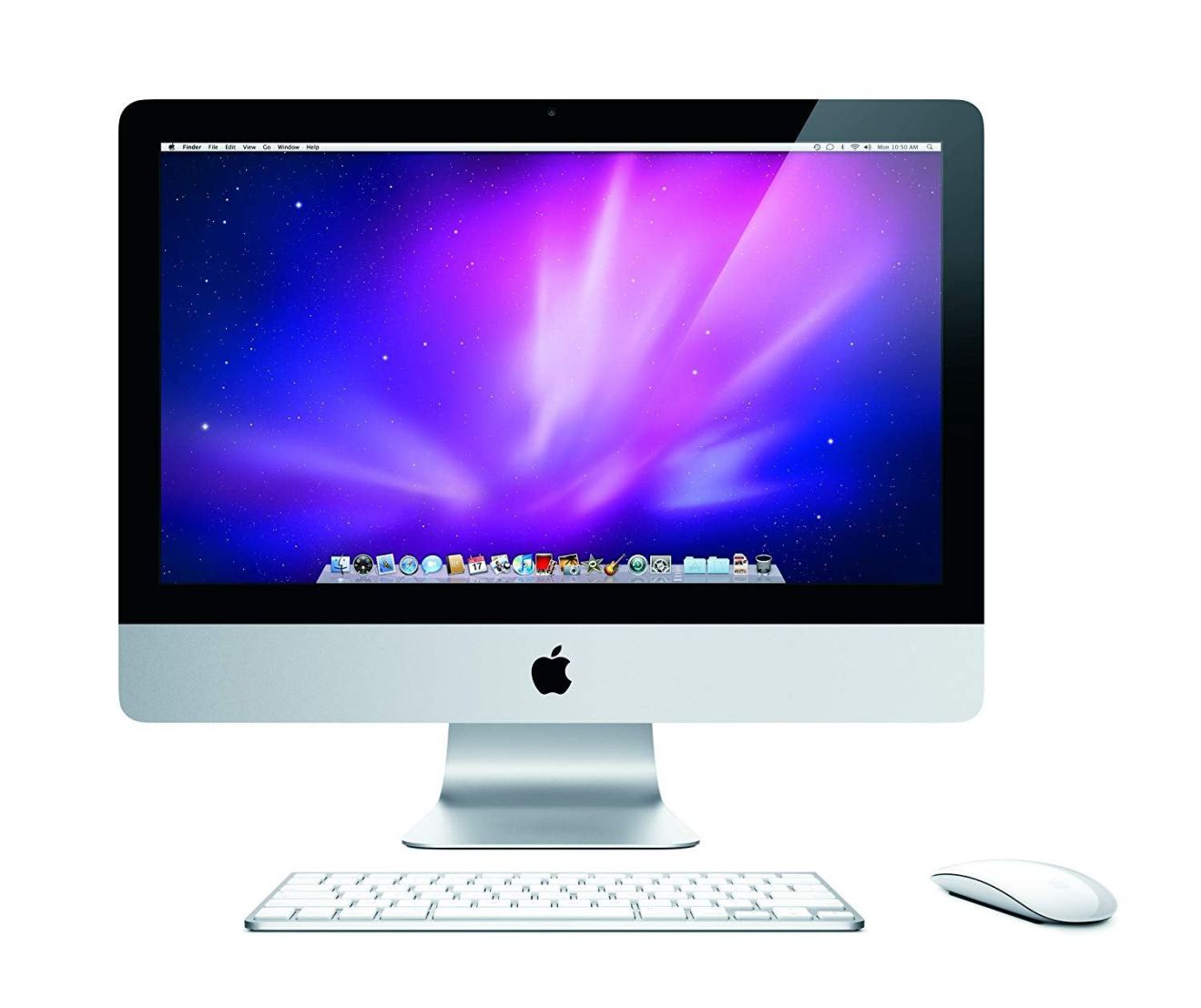

After spending over 20 years working with Macs, both old and new, theres a tool I think would be useful to every Mac owner who is experiencing performance issues.

CleanMyMac is highest rated all-round cleaning app for the Mac, it can quickly diagnose and solve a whole plethora of common (but sometimes tedious to fix) issues at the click of a button. It also just happens to resolve many of the issues covered in the speed up section of this site, so Download CleanMyMac to get your Mac back up to speed today.

This study confirms my own simple experiments, which did not include Amazon. Aside from Google’s advantage of its amazing database, it comes down to priorities. The founders of the AI company bought by Apple have indicated their disappointment more than once at Apple’s failure to develop the routines. This worries me greatly for the future, because it is software, and specifically AI, that will determine the next generation of big winners, not hardware.