Anyone who has used FaceTime knows how hard it is to keep eye contact while looking at the other person’s face on the screen. The reason is simple: to make eye contact you have to look straight into the camera, not at the other person’s on-screen face.

Podcast co-host Will Sigmon and app designer Mike Rundle recently tweeted, however, that Apple seems to have resolved this issue in iOS 13 by introducing a feature known as FaceTime Attention Correction. According to Rundle and Sigmon, the feature in fact works – and Rundle even posted a screenshot to confirm this.

He tweeted: “Looking at him on-screen (not at the camera) produces a picture of me looking dead at his eyes like I was staring into the camera.”

For now, the feature appears to be available only in the iOS 13 developer beta; it does not show up in the public beta’s FaceTime settings.

At press time, Apple had not yet responded to requests for confirmation and more details on how FaceTime Attention Correction works. However, the co-founder of Observant, a company whose software monitors drivers to check that they keep their eyes on the road, tweeted that Apple is using ARKit to build a depth map of the phone user’s face and then adjusting the eyes as necessary.

FaceTime Attention Correction might find its way to all iPhones when iOS 13 is released later in 2019, along with other (already confirmed) features such as Dark Mode and redesigned versions of apps such as Photos, Reminders and Apple Maps.

Apple normally times the release of new iOS versions to coincide with the launch of new iPhone models, but so far it has not given a release date more specific than autumn 2019.

Before you go

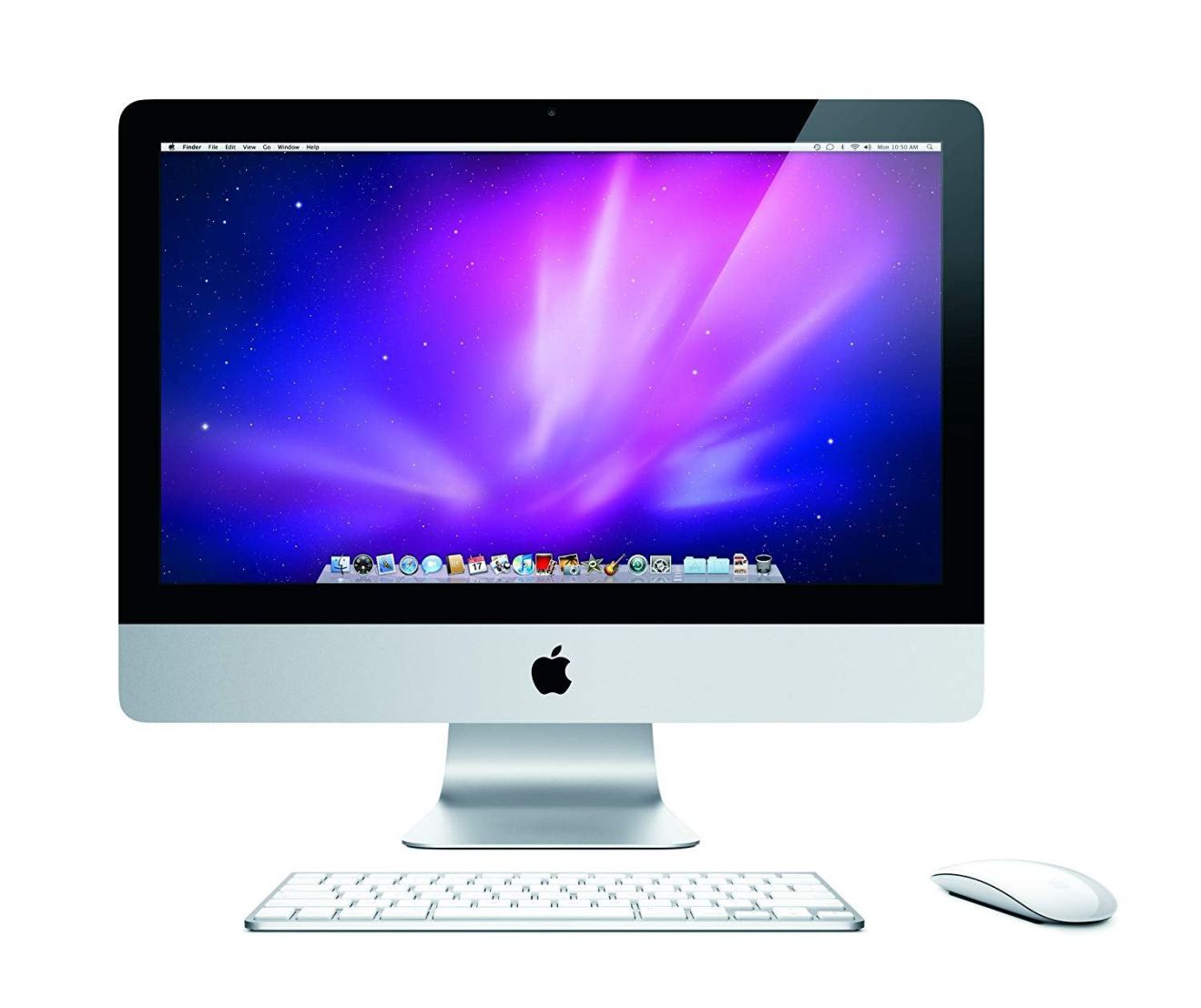

After spending over 20 years working with Macs, both old and new, theres a tool I think would be useful to every Mac owner who is experiencing performance issues.

CleanMyMac is highest rated all-round cleaning app for the Mac, it can quickly diagnose and solve a whole plethora of common (but sometimes tedious to fix) issues at the click of a button. It also just happens to resolve many of the issues covered in the speed up section of this site, so Download CleanMyMac to get your Mac back up to speed today.

Add Comment