In the run-up to the release of iOS 11 later this year, Apple has published a study revealing how it is using machine learning to make its digital voice assistant, Siri, sound more human.

Apart from capturing many hours of top-quality audio that could be analysed to produce voice responses, Apple's biggest challenge was replicating the intonation and stress patterns of spoken language convincingly. The problem was compounded by the fact that this kind of processing can place heavy demands on a device's processor, so even a relatively straightforward method of stitching sounds together into words and sentences could prove too resource-intensive.

This is where machine learning proved invaluable. With sufficient training, it can help a text-to-speech system judge which audio segments fit well together, producing replies that sound more human.
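Choosing segments that fit together is usually framed as unit selection. For each sound in a sentence there are many recorded candidates. Each candidate gets a target cost (how well it matches the sound the sentence needs), each neighbouring pair gets a join cost (how smoothly the two concatenate), and a dynamic-programming (Viterbi) search picks the cheapest overall sequence. Apple's paper describes a hybrid unit-selection approach in which a deep network predicts these costs. The Python below is only a minimal sketch of the search step, assuming hand-written toy cost functions in place of a trained model; the name `select_units`, the (label, pitch) unit format and all pitch values are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical unit format: (phonetic label, pitch in Hz) for one recorded snippet.
Unit = Tuple[str, float]


def select_units(
    candidates: Sequence[Sequence[Unit]],
    target_cost: Callable[[int, Unit], float],
    join_cost: Callable[[Unit, Unit], float],
) -> Tuple[List[Unit], float]:
    """Pick one candidate per position, minimising total target + join cost (Viterbi search)."""
    if not candidates:
        return [], 0.0
    # best[i][j]: cheapest total cost of any sequence ending in candidate j at position i
    best = [[target_cost(0, u) for u in candidates[0]]]
    back: List[List[int]] = [[-1] * len(candidates[0])]
    for i in range(1, len(candidates)):
        row, ptr = [], []
        for u in candidates[i]:
            # Cost of reaching u from each previous candidate, including how smoothly they join
            via = [best[i - 1][k] + join_cost(prev, u) for k, prev in enumerate(candidates[i - 1])]
            k_best = min(range(len(via)), key=via.__getitem__)
            row.append(via[k_best] + target_cost(i, u))
            ptr.append(k_best)
        best.append(row)
        back.append(ptr)
    # Trace back from the cheapest final candidate to recover the chosen sequence
    j = min(range(len(best[-1])), key=best[-1].__getitem__)
    total = best[-1][j]
    path: List[Unit] = []
    for i in range(len(candidates) - 1, -1, -1):
        path.append(candidates[i][j])
        j = back[i][j]
    path.reverse()
    return path, total


# Toy example: two recorded takes per sound; the desired pitch contour is hypothetical.
desired_pitch = [120.0, 130.0, 140.0]
candidates = [
    [("h", 120.0), ("h", 180.0)],
    [("e", 125.0), ("e", 175.0)],
    [("llo", 130.0), ("llo", 200.0)],
]
path, total = select_units(
    candidates,
    target_cost=lambda i, u: abs(u[1] - desired_pitch[i]) / 10,  # mismatch with desired pitch
    join_cost=lambda a, b: abs(a[1] - b[1]) / 10,  # pitch jump at the seam
)
print(path, total)  # [('h', 120.0), ('e', 125.0), ('llo', 130.0)] 2.5
```

Weighting the join cost more heavily favours smoother seams at the expense of matching the target contour; in a trained system, both costs would come from the model rather than from these hand-written lambdas.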

For iOS 11, Apple's engineers hired a female voice actor and recorded around 20 hours of speech. From these recordings they produced as many as two million audio snippets, which were then used to help train a deep learning system.

In the paper, the researchers report that a test audience strongly preferred the new version of Siri's voice over the old one used in iOS 9.

The result is a marked improvement in how Siri delivers answers to general knowledge questions, navigation instructions and notifications about completed requests, with the assistant sounding significantly less robotic than its 2015 counterpart.

So, that is at least one more cool feature to look forward to in iOS 11.

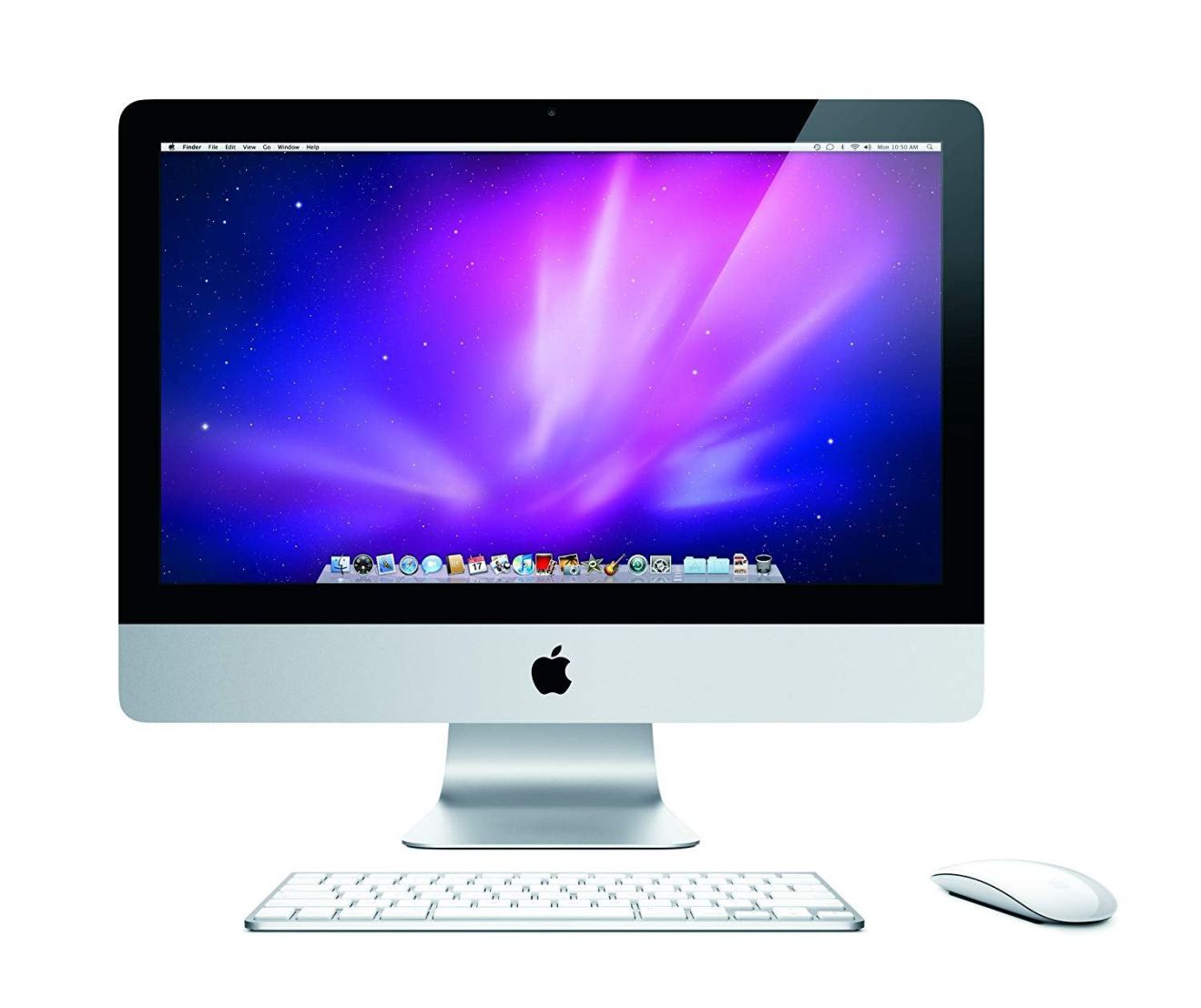

If you don't have an iPhone, Siri is also available on the Mac and is much easier to set up than you might think.

Before you go

After spending over 20 years working with Macs, both old and new, there's one tool I think would be useful to every Mac owner who is experiencing performance issues.

CleanMyMac is the highest-rated all-round cleaning app for the Mac. It can quickly diagnose and solve a whole host of common (but sometimes tedious to fix) issues at the click of a button. It also happens to resolve many of the issues covered in the speed up section of this site, so download CleanMyMac to get your Mac back up to speed today.
